15.3.3 Theory of Nonlinear Curve FittingNLFit-Theory

How Origin Fits the Curve

The aim of nonlinear fitting is to estimate the parameter values which best describe the data. Generally we can describe the process of nonlinear curve fitting as below.

- Generate an initial function curve from the initial values.

- Iterate to adjust parameter values to make data points closer to the curve.

- Stop when minimum distance reaches the stopping criteria to get the best fit

Origin provides options of different algorithm, which have different iterative procedure and statistics to define minimum distance.

Explicit Functions

Fitting Model

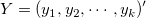

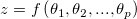

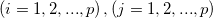

A general nonlinear model can be expressed as follows:

where  is the independent variables and is the independent variables and  is the parameters. is the parameters.

| Examples of the Explicit Function

|

Least-Squares Algorithms

The least square algorithm is to choose the parameters that would minimize the deviations of the theoretical curve(s) from the experimental points. This method is also called chi-square minimization, defined as follows:

where  is the row vector for the ith (i = 1, 2, ... , n) observation. is the row vector for the ith (i = 1, 2, ... , n) observation.

Origin provides two options to adjust the parameter values in the iterative procedure

Levenberg-Marquardt (L-M) Algorithm

The Levenberg-Marquardt (L-M) algorithm11 is a iterative procedure which combines the Gauss-Newton method and the steepest descent method.

The algorithm works well for most cases and become the standard of nonlinear least square routines.

- Compute the

value from the given initial values: value from the given initial values:  . .

- Pick a modest value for

, say , say  = 0.001 = 0.001

- Solve the Levenberg-Marquardt funciton11 for

and evaluate and evaluate

- If

,increase ,increase  by a factor of 10 and go back to step 3 by a factor of 10 and go back to step 3

- if

, decrease , decrease  by a factor of 10, update the parameter values to be by a factor of 10, update the parameter values to be  and go back to step 3 and go back to step 3

- Stop until the

values computed in two successive iterations are small enough (compared with the tolerance) values computed in two successive iterations are small enough (compared with the tolerance)

Downhil Simplex Algorithm

Besides the L-M method, Origin also provides a Downhill Simplex approximation9,10. In geometry, a simplex is a polytope of N + 1 vertices in N dimensions. In non-linear optimization, an analog exists for an objective function of N variables. During the iterations, the Simplex algorithm (also known as Nelder-Mead) adjusts the parameter "simplex" until it converges to a local minimum.

Different from L-M method, the Simplex method does not require derivatives, and it is effective when the computational burden is small. Normally, if you did not get a good value for parameter initialization, you can try this method to get the approximate parameter value for further fitting calculations with L-M. The Simplex method tends to be more stable in that it is less likely to wander into a meaningless part of the parameter space; on the other hand, it is generally much slower than L-M, especially very close to a local minimum. Actually, there is no "perfect" algorithm for nonlinear fitting, and many things may affect the result (e.g., initial values). In complicated models, you may find one method may do better than the other. Additionally, you may want to try both methods to perform the fitting operation.

Orthogonal Distance Regression (ODR) Algorithm

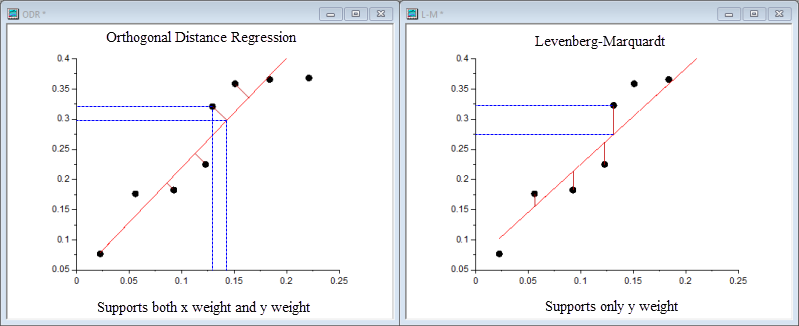

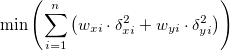

The Orthogonal Distance Regression (ODR) algorithm minimizes the residual sum of squares by adjusting both fitting parameters and values of the independent variable in the iterative process. The residual in ODR is not the difference between the observed value and the predicted value for the dependent variable, but the orthogonal distance from the data to the fitted curve.

Origin uses the ODR algorithm in ODRPACK958.

For a explict function, the ODR algorithm could be expressed as:

subject to the constraints:

where  and and  are the user input weights of are the user input weights of  and and  , ,  and and  are the residual of the corresponding are the residual of the corresponding  and and  , and , and  is the fitting parameter. is the fitting parameter.

For more details of the ODR algorithm, please refer to ODRPACK958.

Comparison between ODR and L-M

To choose the ODR or L-M algorithm for your fitting, you may refer to the following table for information:

|

|

Orthogonal Distance Regression

|

Levenberg-Marquardt

|

| Application

|

Both implicit and explicit functions

|

Only explicit functions

|

| Weight

|

Support both x weight and y weight

|

Support only y weight

|

| Residual Source

|

The orthogonal distance from the data to the fitted curve

|

The difference between the observed value and the predicted value

|

| Iteration Process

|

Adjusting the values of fitting parameters and independent variables

|

Adjusting the values of fitting parameters

|

Implicit Functions

Fitting Model

A general implicit function could be expressed as:

where  and and  are the variables, are the variables,  are the fitting parameters and are the fitting parameters and  is a constant. is a constant.

| Examples of the Implicit Function:

|

Orthogonal Distance Regression (ODR) Algorithm

The ODR method can be used for both implicit functions and explicit functions. To learn more details of ODR method, please refer to the description of ODR mehtod in above section

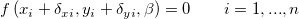

For implicit functions, the ODR algorithm could be expressed as:

subject to:

where  and and  are the user input weights of are the user input weights of  and and  , ,  and and  are the residual of the corresponding are the residual of the corresponding  and and  , and , and  is the fitting parameter. is the fitting parameter.

Weighted Fitting

When the measurement errors are unknown,  are set to 1 for all i, and the curve fitting is performed without weighting. However, when the experimental errors are known, we can treat these errors as weights and use weighted fitting. In this case, the chi-square can be written as: are set to 1 for all i, and the curve fitting is performed without weighting. However, when the experimental errors are known, we can treat these errors as weights and use weighted fitting. In this case, the chi-square can be written as:

There are a number of weighting methods available in Origin. Please read Fitting with Errors and Weighting in the Origin Help file for more details.

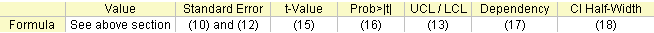

Parameters

The fit-related formulas are summarized here:

The Fitted Value

Computing the fitted values in nonlinear regression is an iterative procedure. You can read a brief introduction in the above section (How Origin Fits the Curve), or see the below-referenced material for more detailed information.

Parameter Standard Errors

During L-M iteration, we need to calculate the partial derivatives matrix F, whose element in ith row and jth column is:

where  is the error of y for the ith observation if Instrumental weight is used. If there is no weight, is the error of y for the ith observation if Instrumental weight is used. If there is no weight,  . And . And  is evaluated for each observation is evaluated for each observation  in each iteration. in each iteration.

Then we can get the Variance-Covariance Matrix for parameters  by: by:

where  is the transpose of the F matrix, s2 is the mean residual variance, also called Reduced Chi-Sqr, or the Deviation of the Model, and can be calculated as follows: is the transpose of the F matrix, s2 is the mean residual variance, also called Reduced Chi-Sqr, or the Deviation of the Model, and can be calculated as follows:

where n is the number of points, and p is the number of parameters.

The square root of the main diagonal value of this matrix C is the Standard Error of the corresponding parameter

where Cii is the element in ith row and ith column of the matrix C. Cij is the covariance between θi and θj.

You can choose whether to exclude s2 when calculating the covariance matrix. This will affect the Standard Error values. When excluding s2, clear the Use reduce Chi-Sqr check box on the Advanced page under Fit Control panel. The covariance is then calculated by:

So the Standard Error now becomes:

The parameter standard errors can give us an idea of the precision of the fitted values. Typically, the magnitude of the standard error values should be lower than the fitted values. If the standard error values are much greater than the fitted values, the fitting model may be overparameterized.

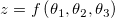

The Standard Error for Derived Parameter

Origin estimates the standard errors for the derived parameters according to the Error Propagation formula, which is an approximate formula.

Let  be the function with a combination (linear or non-linear) of be the function with a combination (linear or non-linear) of  variables variables  . .

The general law of error propagation is:

where  is the covariance value for is the covariance value for  , and , and  . .

You can choose whether to exclude mean residual variance  when calculating the covariance matrix when calculating the covariance matrix  , which affects the Standard Error values for derived parameters. When excluding , which affects the Standard Error values for derived parameters. When excluding  , clear the Use reduce Chi-Sqr check box on the Advanced page under Fit Control panel. , clear the Use reduce Chi-Sqr check box on the Advanced page under Fit Control panel.

For example, using three variables

we get:

Now, let the derived parameter be  , and let the fitting parameters be , and let the fitting parameters be  . The standard error for the derived parameter . The standard error for the derived parameter  is is  . .

Confidence Intervals

Origin provides two methods to calculate the confidence intervals for parameters: Asymptotic-Symmetry method and Model-Comparison method.

Asymptotic-Symmetry Method

One assumption in regression analysis is that data is normally distributed, so we can use the standard error values to construct the Parameter Confidence Intervals. For a given significance level, α, the (1-α)x100% confidence interval for the parameter is:

The parameter confidence interval indicates how likely the interval is to contain the true value.

The confidence interval illustrated above is Asymptotic, which is the most frequently used method to calculate the confidence interval. The "Asymptotic" here means it is an approximate value.

Model-Comparison Method

If you need more accurate values, you can use the Model Comparison Based method to estimate the confidence interval.

If the Model Comparison method is used, the upper and lower confidence limits will be calculated by searching for the values of each parameter p that makes RSS(θj) (minimized over the remaining parameters) greater than RSS by a factor of (1+F/(n-p)).

where F = Ftable(α,1,n-p)and RSS is the minimum residual sum of square found during the fitting session.

t Value

You can choose to perform a t-test on each parameter to see whether its value is equal to 0. The null hypothesis of the t-test on the jth parameter is:

And the alternative hypothesis is:

The t-value can be computed as:

Prob>|t|

The probability that H0 in the t test above is true.

where tcdf(t, df) computes the lower tail probability for Student's t distribution with df degree of freedom.

Dependency

If the equation is overparameterized, there will be mutual dependency between parameters. The dependency for the ith parameter is defined as:

and (C-1)ii is the (i, i)th diagonal element of the inverse of matrix C. If this value is close to 1, there is strong dependency.

To learn more about how the value assess the quality of a fit model, see Model Diagnosis Using Dependency Values page

CI Half Width

The Confidence Interval Half Width is:

where UCL and LCL is the Upper Confidence Interval and Lower Confidence Interval, respectively.

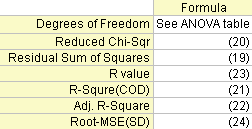

Statistics

Several fit statistics formulas are summarized below:

Degree of Freedom

The Error degree of freedom. Please refer to the ANOVA Table for more details.

Residual Sum of Squares

The residual sum of squares:

Reduced Chi-Sqr

The Reduced Chi-square value, which equals the residual sum of square divided by the degree of freedom.

R-Square (COD)

The R2 value shows the goodness of a fit, and can be computed by:

where TSS is the total sum of square, and RSS is the residual sum of square.

Adj. R-Square

The adjusted R2 value:

R Value

The R value is the square root of R2:

For more information on R2, adjusted R2 and R, please see Goodness of Fit.

Root-MSE (SD)

Root mean square of the error, or the Standard Deviation of the residuals, equal to the square root of reduced χ2:

ANOVA Table

The ANOVA Table:

Note: The ANOVA table is not available for implicit function fitting.

|

|

df

|

Sum of Squares

|

Mean Square

|

F Value

|

Prob > F

|

| Model

|

p

|

SSreg = TSS - RSS

|

MSreg = SSreg / p

|

MSreg / MSE

|

p-value

|

| Error

|

n - p

|

RSS

|

MSE = RSS / (n - p)

|

|

|

| Uncorrected Total

|

n

|

TSS

|

|

|

|

| Corrected Total

|

n-1

|

TSScorrected

|

|

|

|

Note: In nonlinear fitting, Origin outputs both corrected and uncorrected total sum of squares:

Corrected model:

Uncorrected model:

The F value here is a test of whether the fitting model differs significantly from the model y=constant. Additionally, the p-value, or significance level, is reported with an F-test. We can reject the null hypothesis if the p-value is less than  , which means that the fitting model differs significantly from the model y=constant. , which means that the fitting model differs significantly from the model y=constant.

Confidence and Prediction Bands

Confidence Band

The confidence interval for the fitting function says how good your estimate of the value of the fitting function is at particular values of the independent variables. You can claim with 100α% confidence that the correct value for the fitting function lies within the confidence interval, where α is the desired level of confidence. This defined confidence interval for the fitting function is computed as:

where:

Prediction Band

The prediction interval for the desired confidence level α is the interval within which 100α% of all the experimental points in a series of repeated measurements are expected to fall at particular values of the independent variables. This defined prediction interval for the fitting function is computed as:

where

is Reduced is Reduced

| Notes: The Confidence Band and Prediction Band in the fitted curve plot are not available for implicit function fitting.

|

Topics for Further Reading

Reference

- William. H. Press, etc. Numerical Recipes in C++. Cambridge University Press, 2002.

- Norman R. Draper, Harry Smith. Applied Regression Analysis, Third Edition. John Wiley & Sons, Inc. 1998.

- George Casella, et al. Applied Regression Analysis: A Research Tool, Second Edition. Springer-Verlag New York, Inc. 1998.

- G. A. F. Seber, C. J. Wild. Nonlinear Regression. John Wiley & Sons, Inc. 2003.

- David A. Ratkowsky. Handbook of Nonlinear Regression Models. Marcel Dekker, Inc. 1990.

- Douglas M. Bates, Donald G. Watts. Nonlinear Regression Analysis & Its Applications. John Wiley & Sons, Inc. 1988.

- Marko Ledvij. Curve Fitting Made Easy. The Industrial Physicist. Apr./May 2003. 9:24-27.

- "J. W. Zwolak, P.T. Boggs, and L.T. Watson, ``Algorithm 869: ODRPACK95: A weighted orthogonal distance regression code with bound constraints, ACM Transactions on Mathematical Software Vol. 33, Issue 4, August 2007."

- Nelder, J.A., and R. Mead. 1965. Computer Journal, vol. 7, pp. 308 -313

- Numerical Recipes in C, Ch. 10.4, Downhill Simplex Method in Multidimensions.

- Numerical Recipes in C, Ch. 15.5, Nonlinear Models.

|